Matrox Imaging: Matrox Imaging Library X Specifications

Ranked No. 24 of 85 in Robot Software

Matrox® Imaging Library (MIL) X is a comprehensive collection of software tools for developing machine vision, image analysis, and medical imaging applications. MIL X includes tools for every step in the process, from application feasibility to prototyping, through to development and ultimately deployment.

The software development kit (SDK) features interactive software and programming functions for image capture, processing, analysis, annotation, display, and archiving. These tools are designed to enhance productivity, thereby reducing the time and effort required to bring solutions to market.

Image capture, processing, and analysis operations have the accuracy and robustness needed to tackle the most demanding applications. These operations are also carefully optimized for speed to address the severe time constraints encountered in many applications.

MIL X at a glance

- Solve applications rather than develop underlying tools by leveraging a toolkit with a more than 25-year history of reliable performance

- Tackle applications with utmost confidence using field-proven tools for analyzing, classifying, locating, measuring, reading, and verifying

- Base analysis on monochrome and color 2D images as well as 3D profiles, depth maps, and point clouds

- Harness the full power of today’s hardware through optimizations exploiting SIMD, multi-core CPU, and multi-CPU technologies

- Support platforms ranging from smart cameras to high-performance computing (HPC) clusters via a single consistent and intuitive application programming interface (API)

- Obtain live data in different ways, with support for analog, Camera Link®, CoaXPress®, DisplayPort™, GenTL, GigE Vision®, HDMI™, SDI, and USB3 Vision® interfaces

- Maintain flexibility and choice by way of support for 64-bit Windows® and Linux® along with Intel® and Arm® processor architectures

- Leverage available programming know-how with support for C, C++, C#, CPython, and Visual Basic® languages

- Experiment, prototype, and generate program code using the MIL CoPilot interactive environment

- Increase productivity and reduce development costs with Matrox Vision Academy online and on-premises training

First released in 1993, MIL has evolved to keep pace with and anticipate emerging industry requirements. It was conceived with an easy-to-use, coherent API that has stood the test of time. MIL pioneered the concept of hardware independence with the same API for different image acquisition and processing platforms. A team of dedicated, highly skilled computer scientists, mathematicians, software engineers, and physicists continues to maintain and enhance MIL.

MIL is maintained and developed using industry-recognized best practices, including peer review, user involvement, and daily builds. Users are asked to evaluate and report on new tools and enhancements, which strengthens and validates releases. Ongoing MIL development is integrated and tested as a whole on a daily basis.

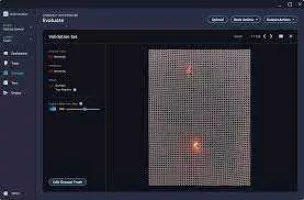

MIL SQA

In addition to the thorough manual testing performed prior to each release, MIL continuously undergoes automated testing during the course of its development. The automated validation suite—consisting of both systematic and random tests—verifies the accuracy, precision, robustness, and speed of image processing and analysis operations. Results, where applicable, are compared against those of previous releases to ensure that performance remains consistent. The automated validation suite runs continuously on hundreds of systems simultaneously, rapidly providing wide-ranging test coverage. The systematic tests are performed on a large database of images representing a broad sample of real-world applications.
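The comparison against previous releases described above can be illustrated with a minimal sketch. This is not Matrox's validation suite: the function name `compare_to_baseline`, the metric names, and the tolerance are all hypothetical, chosen only to show how numeric results from one build might be checked against stored results from an earlier one.

```python
import math

def compare_to_baseline(current, baseline, rel_tol=1e-6):
    """Return the names of metrics that are missing or differ from the baseline.

    Hypothetical helper: `current` and `baseline` map metric names to floats.
    """
    regressions = []
    for name, expected in baseline.items():
        actual = current.get(name)
        if actual is None or not math.isclose(actual, expected, rel_tol=rel_tol):
            regressions.append(name)
    return regressions

# Illustrative values only; these are not real MIL results.
baseline = {"edge_position_px": 102.375, "match_score": 0.987}
current = {"edge_position_px": 102.375, "match_score": 0.951}

print(compare_to_baseline(current, baseline))  # prints ['match_score']
```

A real suite would presumably also track speed and use per-metric tolerances; a single relative tolerance is used here to keep the sketch short.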

Latest key additions and enhancements:

- Simplified training for deep learning

- New deep neural networks for classification and segmentation

- New deep learning inference engine

- Additional 3D processing operations including filters

- 3D blob analysis

- 3D shape finding

- Hand-eye calibration for robot guidance

- Improvements to SureDotOCR®

- Makeover of CPython interface now with NumPy support

Field-proven vision tools

Image analysis and processing tools

Central to MIL X are tools for calibrating; classifying, enhancing, and transforming images; locating objects; extracting and measuring features; reading character strings; and decoding and verifying identification marks. These tools are carefully developed to provide outstanding performance and reliability, and can be used within a single computer system or distributed across several computer systems.

- Pattern recognition tools

- Shape finding tools

- Feature extraction and analysis tools

- Classification tools (using machine learning including deep learning)

- 1D and 2D measurement tools

- Color analysis tools

- Character recognition tools

- 1D and 2D code reading and verification tool

- Registration tools

- 2D calibration tool

- Image processing primitives tools

- Image compression and video encoding tool

- Tools fully optimized for speed

- 3D vision tools

- Distributed MIL X interface

MIL CoPilot interactive environment

Included with MIL X is MIL CoPilot, an interactive environment to facilitate and accelerate the evaluation and prototyping of an application. This includes configuring the settings or context of MIL X vision tools. The same environment can also initiate—and therefore shorten—the application development process through the generation of MIL X program code.

Running on 64-bit Windows, MIL CoPilot provides interactive access to MIL X processing and analysis operations via a familiar contextual ribbon menu design. It includes various utilities to study images and help determine the best analysis tools and settings for a given project. Also available are utilities to generate a custom encoded chessboard calibration target and to edit images.

Applied operations are recorded in an Operation List, which can be edited at any time and can also take the form of an external script. An Object Browser keeps track of MIL X objects created during a session and gives convenient access to them at any moment. Non-image results are presented in tabular form, and a table entry can be identified directly on the image. The annotation of results onto an image is also configurable.

MIL CoPilot presents dedicated workspaces for training one of the supplied deep learning neural networks for classification. These workspaces feature a simplified user interface that reveals only the functionality needed to accomplish the training task, such as an image label mask editor. Another specialized workspace is provided to batch-process images from an input folder to an output folder.
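To make the idea of a recorded, replayable operation list concrete, here is a minimal sketch in plain Python. It does not use or reproduce the MIL X API or MIL CoPilot's script format: the `OperationList` class, the `invert` and `threshold` operations, and the in-memory "images" (lists of pixel rows) are all hypothetical stand-ins.

```python
from typing import Callable, Dict, List, Tuple

Image = List[List[int]]  # grayscale image as rows of 0-255 values (stand-in)

def invert(image: Image) -> Image:
    """Invert every pixel."""
    return [[255 - p for p in row] for row in image]

def threshold(image: Image, level: int) -> Image:
    """Set pixels at or above `level` to 255 and all others to 0."""
    return [[255 if p >= level else 0 for p in row] for row in image]

class OperationList:
    """Hypothetical recorder: stores operations so they can be replayed later."""

    def __init__(self) -> None:
        self.steps: List[Tuple[Callable[..., Image], Dict[str, int]]] = []

    def record(self, func: Callable[..., Image], **params: int) -> None:
        self.steps.append((func, params))

    def replay(self, image: Image) -> Image:
        # Apply each recorded operation in order to a new input image.
        for func, params in self.steps:
            image = func(image, **params)
        return image

    def describe(self) -> List[str]:
        # Human-readable listing, similar in spirit to an editable script.
        return [
            f"{func.__name__}({', '.join(f'{k}={v}' for k, v in params.items())})"
            for func, params in self.steps
        ]

ops = OperationList()
ops.record(invert)
ops.record(threshold, level=128)

# Batch-process a set of inputs; a dict stands in for an input folder.
batch = {"a.png": [[0, 200], [130, 255]], "b.png": [[127, 128], [10, 250]]}
results = {name: ops.replay(img) for name, img in batch.items()}
```

Keeping each step as a function plus its parameters is what lets the same sequence be edited, listed, and reapplied to every image in a batch.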

Once an operation sequence is established, it can be converted into functional program code in any language supported by MIL X. The program code can take the form of a command-line executable or dynamic link library (DLL); this can be packaged as a Visual Studio project, which in turn can be built without leaving MIL CoPilot. All work carried out in a session is saved as a workspace for future reference and sharing with colleagues.

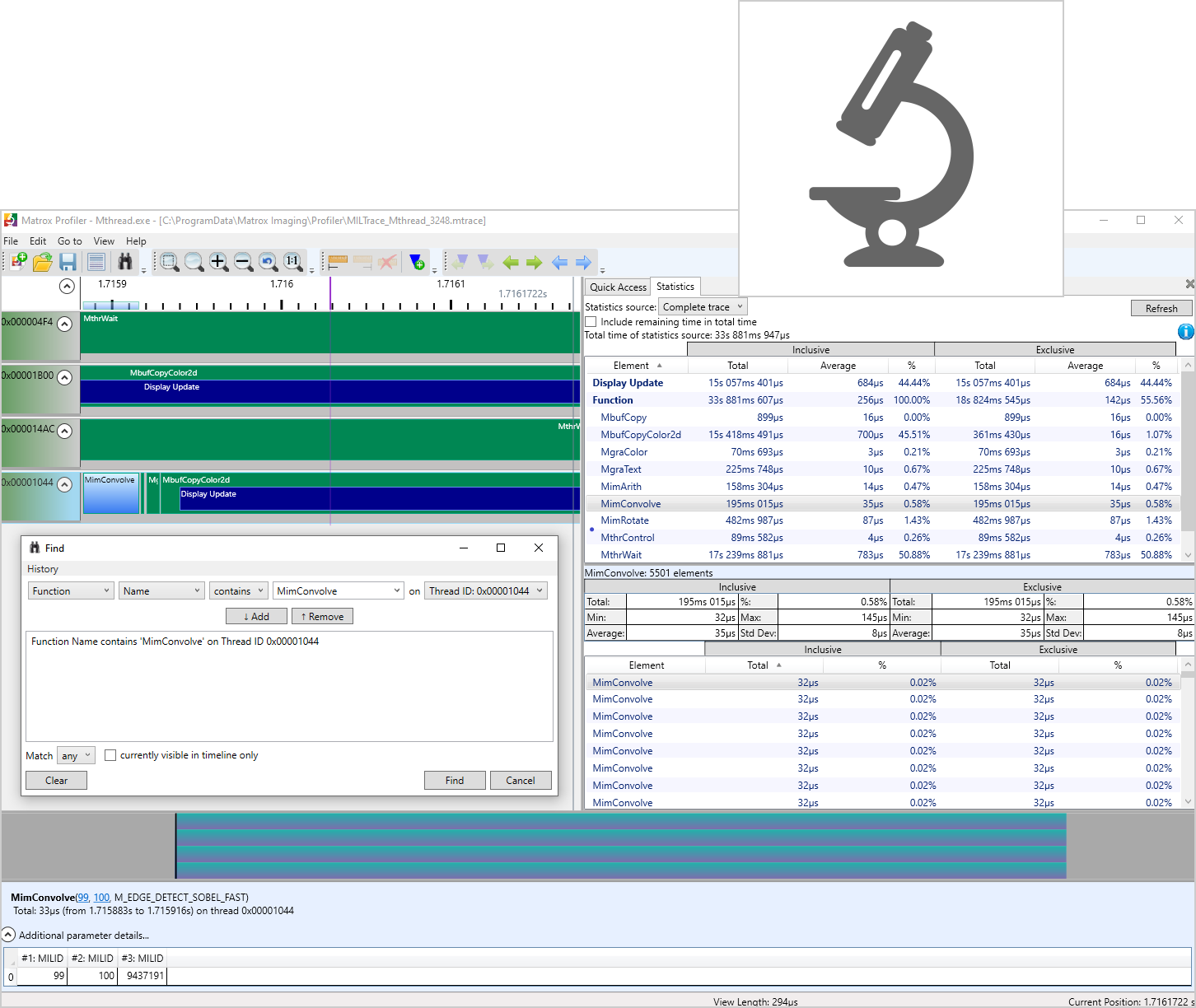

Matrox Profiler

Matrox Profiler is a Windows-based utility to post-analyze the execution of a multi-threaded application for performance bottlenecks and synchronization issues. It presents the function calls made over time per application thread on a navigable timeline. Matrox Profiler lets users search for and select specific function calls to see their parameters and execution times. It computes statistics on execution times and presents these on a per-function basis. Matrox Profiler tracks not only MIL X functions but also suitably tagged user functions. Function tracing can be disabled altogether to safeguard the inner workings of a deployed application.
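The per-function statistics mentioned above can be sketched as follows. This is only an illustration of the general idea, not Matrox Profiler's trace format or output: the record layout, the function names in the trace, and `per_function_stats` are assumptions made for the example.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical trace records: (thread id, function name, start ms, end ms).
trace = [
    (1, "grab_frame", 0.0, 4.0),
    (2, "find_pattern", 1.0, 9.0),
    (1, "grab_frame", 10.0, 16.0),
    (2, "find_pattern", 12.0, 16.0),
]

def per_function_stats(records):
    """Group call durations by function name and summarize them."""
    durations = defaultdict(list)
    for _thread, name, start, end in records:
        durations[name].append(end - start)
    return {
        name: {"calls": len(d), "min": min(d), "max": max(d), "mean": mean(d)}
        for name, d in durations.items()
    }

stats = per_function_stats(trace)
for name, summary in stats.items():
    print(name, summary)
```

Grouping the same records by thread id instead of function name would give the per-thread view that a timeline presents.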

Development features:

- Complete application development environment

- Portable API

- .NET development

- JIT compilation and scripting

- Simplified platform management

- Designed for multi-tasking

- Buffers and containers

- Saving and loading images

- Industrial and robot communication

- WebSocket access

- Flexible and dependable image capture

- Matrox Capture Works

- Simplified 2D image display

- Graphics, regions, and fixtures

- Native 3D display

- Application deployment

- Documentation, IDE integration, and examples

- MIL-Lite X

- Software architecture

| Software type |

|